Account Data Review – PreĺAdac, екфзрги, 18552099549, 8148746286, 3237633355

The account data review for PreĺAdac, екфзрги, and the associated identifiers examines user interactions, transactions, and credentialed access to assess integrity within governance frameworks. The approach emphasizes privacy-preserving verification, traceable outcomes, and standardized access controls that produce auditable trails. Discrepancies are quantified and paired with documented remediation and rollback plans, while ongoing monitoring supports regulatory alignment. The discussion identifies gaps and lays out a structured path forward, since key decisions depend on transparent reconciliation.

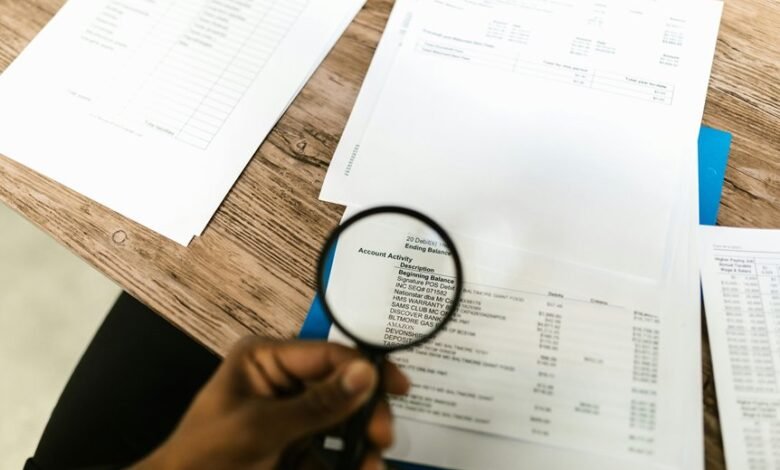

What the Account Data Means and Why It Matters

The account data serves as a structured record of user interactions, transactions, and credentialed access within the system. It enables monitoring, auditing, and risk assessment with disciplined transparency. The emphasis on account integrity ensures consistent behavior and trustworthiness across sessions. Effective data governance aligns collection, storage, and usage, supporting accountability, privacy, and freedom through responsible, verifiable data practices.

How to Verify Data Accuracy Without Breaching Privacy

Verification relies on privacy safeguards and selective auditing to compare source attributes without exposing the underlying data. Methods include hashed identifiers, differential privacy, and consent validation to confirm that data processing is legitimate. Independent reconcilers reduce bias, and a traceable record documents each check and its outcome. The approach minimizes data exposure and keeps validation transparent and policy-driven.
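As an illustration of the hashed-identifier method, the sketch below compares two identifier lists by keyed digest only, so neither side has to reveal raw values. The function names, the sample identifiers, and the shared key are hypothetical; a real deployment would need its own key management and normalization rules.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, key: bytes) -> str:
    """Return a keyed SHA-256 digest so the raw identifier is never shared."""
    normalized = identifier.strip().lower()  # assumed normalization rule
    return hmac.new(key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()


def compare_sources(source_a, source_b, key: bytes) -> dict:
    """Compare two identifier lists by digest and report counts only."""
    digests_a = {pseudonymize(i, key) for i in source_a}
    digests_b = {pseudonymize(i, key) for i in source_b}
    return {
        "matched": len(digests_a & digests_b),
        "only_in_a": len(digests_a - digests_b),
        "only_in_b": len(digests_b - digests_a),
    }


# Hypothetical sample data, for illustration only.
shared_key = b"example-key-rotate-in-practice"
ledger_ids = ["acct-001", "acct-002", "acct-003"]
audit_ids = ["ACCT-002 ", "acct-003", "acct-004"]

summary = compare_sources(ledger_ids, audit_ids, shared_key)
print(summary)
```

A keyed digest (HMAC) is used here instead of a plain hash because short or guessable identifiers can otherwise be recovered by hashing candidate values; that choice is an assumption of this sketch, not something the review prescribes.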

Practical Steps to Streamline Data Review and Access Controls

Practical steps to streamline data review and access controls build on the prior discussion of verification methods, translating privacy-preserving validation into actionable governance. The approach emphasizes clear ownership, standardized review cadences, and documented access requests. Data governance frameworks quantify risk, assign accountability, and align controls with policy. Access auditing accompanies automated alerts, ensuring traceability, reproducibility, and auditable decision trails without hindering operations.
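One way to picture documented access requests paired with automated alerts is the minimal sketch below. It flags any access event that is not covered by an approved, unexpired request. The `AccessRequest` and `AccessEvent` types, their fields, and the sample users are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class AccessRequest:
    user: str
    resource: str
    approved_by: str
    expires_at: datetime


@dataclass(frozen=True)
class AccessEvent:
    user: str
    resource: str
    at: datetime


def find_unauthorized(events, requests):
    """Return events not covered by an approved request that is still valid."""
    flagged = []
    for event in events:
        covered = any(
            r.user == event.user
            and r.resource == event.resource
            and event.at <= r.expires_at
            for r in requests
        )
        if not covered:
            flagged.append(event)
    return flagged


# Hypothetical sample data, for illustration only.
now = datetime(2024, 1, 15, 12, 0)
requests = [
    AccessRequest("alice", "billing", "governance-lead", now + timedelta(days=30)),
]
events = [
    AccessEvent("alice", "billing", now),                       # covered
    AccessEvent("bob", "billing", now),                         # no request on file
    AccessEvent("alice", "billing", now + timedelta(days=45)),  # request expired
]

alerts = find_unauthorized(events, requests)
for event in alerts:
    print(f"ALERT: {event.user} accessed {event.resource} at {event.at.isoformat()}")
```

Because every alert traces back to a specific event and the absence of a matching request, the same records can serve as the audit trail for later review.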

Troubleshooting Common Data Discrepancies and Next Steps

In addressing data discrepancies, a structured diagnostic approach identifies root causes, quantifies impact, and prioritizes remediation steps. The process emphasizes data integrity through traceable corrections, with documented changes and rollback plans.
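A traceable correction with a rollback path might look like the sketch below. The `ChangeLog` class, its method names, and the sample record are hypothetical; the point is only that each change stores the old value, the new value, and a reason, and that a rollback is itself logged.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeLog:
    records: dict
    history: list = field(default_factory=list)

    def correct(self, key, field_name, new_value, reason):
        """Apply a correction and record old value, new value, and reason."""
        old_value = self.records[key][field_name]
        self.history.append((key, field_name, old_value, new_value, reason))
        self.records[key][field_name] = new_value

    def rollback_last(self):
        """Restore the value before the latest entry and log the reversal."""
        key, field_name, old_value, new_value, _reason = self.history[-1]
        self.history.append((key, field_name, new_value, old_value, "rollback"))
        self.records[key][field_name] = old_value


# Hypothetical sample record, for illustration only.
log = ChangeLog({"acct-001": {"balance": 100}})
log.correct("acct-001", "balance", 120, "missed deposit")
after_fix = log.records["acct-001"]["balance"]

log.rollback_last()
after_rollback = log.records["acct-001"]["balance"]
print(after_fix, after_rollback, len(log.history))
```

The history is append-only, so the rollback leaves the original correction visible. A fuller version would also track which entries have already been reverted, since this sketch only reverses the latest one.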

Next steps include reinforcing privacy safeguards, auditing access, and aligning with data governance policies.

Clear metrics, timely notifications, and continuous monitoring ensure sustained accuracy and accountability across systems.

Frequently Asked Questions

How Often Should We Audit Account Data for Accuracy?

Audits should occur quarterly to balance rigor and efficiency while keeping the data accurate. Each audit should draw on data governance and data lineage so that findings are traceable, owners are accountable, and remediation happens promptly within defined, documented controls.

What Are the Common Sources of Data Mismatches?

Mismatches most often arise where two sources should agree but do not: master records versus transactional feeds, system clocks versus event logs, user inputs versus import files, and external vendor data versus reconciliation files. Data governance safeguards consistency across these pairs, while visibility into each source limits how far a mismatch propagates and speeds up correction.
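To make the first pairing concrete, the sketch below reconciles a master record set against a transactional feed and sorts the differences into three buckets. The dictionary shape (account key mapped to a balance) and the sample values are assumptions for illustration.

```python
def reconcile(master: dict, feed: dict) -> dict:
    """Classify keys as missing on one side or present with differing values."""
    shared = master.keys() & feed.keys()
    return {
        "missing_in_feed": sorted(master.keys() - feed.keys()),
        "missing_in_master": sorted(feed.keys() - master.keys()),
        "value_mismatch": sorted(k for k in shared if master[k] != feed[k]),
    }


# Hypothetical sample data, for illustration only.
master = {"acct-001": 100, "acct-002": 250, "acct-003": 75}
feed = {"acct-002": 250, "acct-003": 80, "acct-004": 10}

report = reconcile(master, feed)
print(report)
```

Separating "missing" from "different" matters in practice, because a missing key usually points to a load or timing problem, while a differing value points to an update that reached only one side.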

Who Should Have Final Approval for Data Changes?

The final approval for data changes should reside with a designated data governance lead or change approval board; they ensure compliance, traceability, and risk mitigation, while balancing operational needs and stakeholder accountability.

How Do We Measure the Impact of Data Review on Ops?

Early detection is cheaper than late correction, so the evaluation tracks impact metrics, workflow optimization, anomaly resolution, and governance alignment to assess how data review processes affect operations. It favors repeatable, transparent practices that keep teams flexible while maintaining disciplined governance and continuous improvement.

What Tools Succeed in Automating Anomaly Detection?

Automated anomaly detection tools succeed when they combine robust algorithms with bias mitigation, continuously update their models and thresholds, and provide clear monitoring dashboards that expose drift, false positives, and confidence levels, supporting a disciplined operational approach.
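As a simple illustration of a threshold-based detector that reports a confidence signal, the sketch below flags values whose z-score against a trailing window exceeds a configurable threshold. This is one basic technique, not a description of any particular tool; the function name, window size, threshold, and sample series are all assumptions.

```python
from statistics import mean, stdev


def flag_anomalies(values, window=5, threshold=3.0):
    """Flag points whose z-score against the preceding window exceeds threshold."""
    flagged = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            continue  # flat baseline: z-score is undefined, so skip the point
        z = (values[i] - mu) / sigma
        if abs(z) > threshold:
            flagged.append((i, round(z, 2)))  # keep the score for dashboards
    return flagged


# Hypothetical daily login counts, for illustration only.
daily_logins = [20, 22, 19, 21, 20, 23, 21, 95, 22, 20]

flagged = flag_anomalies(daily_logins)
for index, score in flagged:
    print(f"index {index}: value {daily_logins[index]}, z-score {score}")
```

Note that a flagged spike stays in the trailing window and inflates the baseline for the next few points, which is one reason thresholds and models need the ongoing tuning described above.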

Conclusion

The review closes the way it began: with prior audits guiding present scrutiny. By tracing provenance and masking sensitive details, it shows that integrity rests on repeatable, auditable steps carried out by accountable stewards. As discrepancies are quantified and remediated, governance becomes routine practice that reflects the controls already in place. A disciplined cadence of verification sustains ongoing confidence in the data.